to hyperdata.it

Not Climbing : Marlin Spike

As a kid I was the one the family would turn to, to undo knots. Having played with various cords & ropes recently: once they've been loaded with a middle-aged man, just nope.

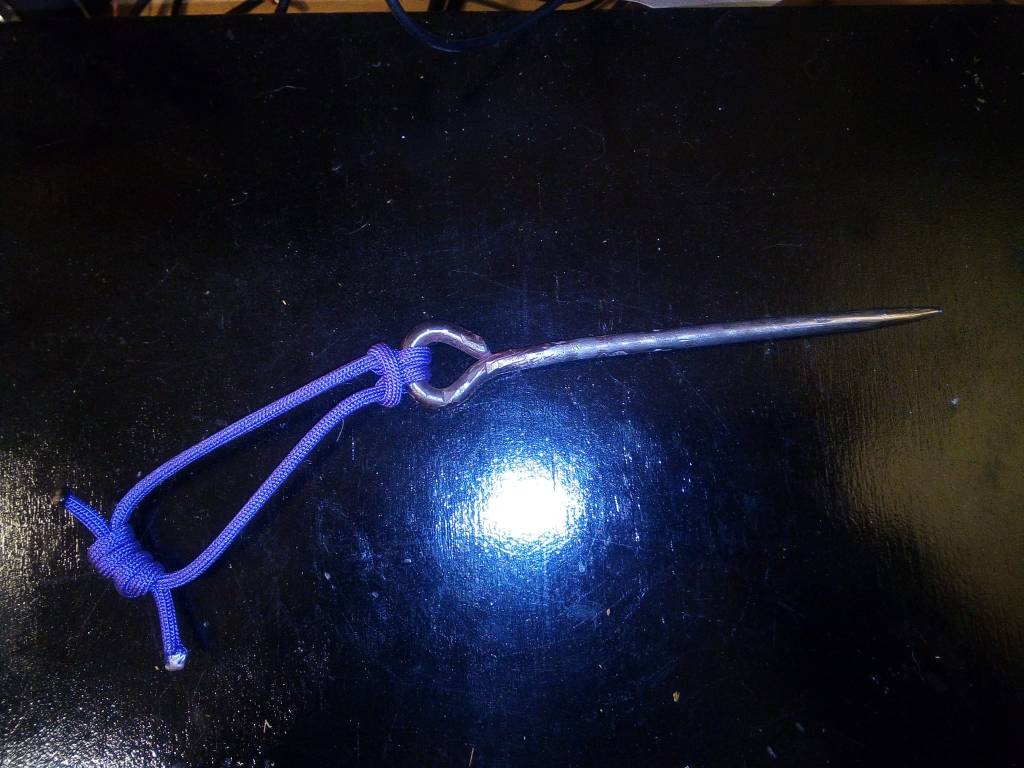

But coincidentally, watching YouTube videos on knots, I came across the Marlinspike hitch. It's a really useful thing: an easy way to attach a cord to a stick or whatever, so you can pull much harder. Naturally (Wikipedia) it comes from nautical stuff. Hemingway innit. And a marlinspike is a tool sailors use to free knots: basically a spike with a rounded end, often curve-diamond shaped, that you can stick in to work the knot loose.

There is a whole community of paracord weavers (both creative and survivalist) and, obviously, a product range to go with it.

OK, I have a hacksaw and a bench grinder, so I fashioned an 8-inch nail into that shape. (Nine Inch Nails is boastful.)

Bit inconvenient, a bare spike. Needs a loop on the end.

Now the fun starts.

Last blowtorch I had, mishap. I'd run out of firelighters, so propped the little blowtorch up agin the logs. My back was turned for literally seconds (I think I was cooking, in clear sight, just not noticing).

Oops. But luckily no boom!

New blowtorch, great fun.

Really good tip I learnt in school metalwork (yes, I went to a school that had a forge; aside from that it was a shithole): to make a loop, bend it 90 degrees first, then bend it back round.

Starts wonky –

Measure the girth first. Humans are very bad at estimating.
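The "measure first" advice has a bit of simple arithmetic behind it. A sketch, assuming the usual smith's rule of thumb that the bar's centre line (the neutral axis) neither stretches nor squashes as it bends, so a closed eye uses up roughly pi x (inside diameter + bar thickness) of stock. The 20 mm eye and 5 mm shank are hypothetical numbers, not measurements of the nail.

```python
import math


def stock_length_for_eye(inner_diameter_mm: float, rod_diameter_mm: float) -> float:
    """Approximate length of rod consumed by a closed circular eye.

    Rule of thumb: measure along the bar's centre line, i.e. the
    circumference at the mean diameter (inside diameter + rod thickness).
    """
    mean_diameter = inner_diameter_mm + rod_diameter_mm
    return math.pi * mean_diameter


# Hypothetical: a finger-sized 20 mm eye bent from a ~5 mm nail shank.
print(f"{stock_length_for_eye(20, 5):.1f} mm")  # 78.5 mm
```

Add a little extra for the 90 degree neck bend and any tidying with the grinder.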

Bendy-bend.

So, the hitch –

and nearly-finally (I need a cork or something on it in case of running-with-marlinspike tragedy) –

Not Climbing : Mattress Rescue

Now lockdown is easing in the UK, my mother has started making noises about coming over. She usually visits at least once a year, but her health’s not been great the past couple of years and, ermm, pandemic. I mentioned this to Marinella this morning, she asked, “where’s she going to sleep?”. Well, my bed, I’ll take the fold-down, as usual. I do have a spare bed on the soppalco (mezzanine above music room) and the fold-down couch in the kitchen, but my bed is the comfiest, and has a bathroom next to it, major bonus in my mother’s book.

Except it hasn’t been very comfortable of late. Ancient mattress, and when Claudiopup was a bit younger he enjoyed tearing it up. Bare springs in places, which I’ve worked around by piling on folded blankets in appropriate places. Has got a bit medieval.

Over in No.7, my previous house just across the lane (empty, awaiting letting or sale), there’s a perfectly good mattress – a futon I was given years ago, no doubt better for the spine. I’ve been putting off bringing it over because it seemed so daunting. Double mattresses are fairly heavy things, total nightmare to shift because of shape & bendiness and both houses have narrow, winding stairways. Two strong people needed, even then there’d be a lot of cursing. I’m not strong. Could ask neighbours who’d be happy to oblige, but don’t like asking…

But, in recent months I’ve been accumulating climbing gear for other jobs around the place (fix roof; trim trees). I do fancy trying climbing for recreation again (after occasional attempts 30+ years ago), but that’s just a corollary of having spent $$$ on climbing gear: might as well get the most out of it.

This mattress replacement: surely I, with the help of climbing gear and Newtonian physics, can do it without breaking my back. Give me a lever long enough…

[[Tyrolean traverse did cross my mind, very elegant solution, but most of this house would be in the way, and frankly, it’d be suicidal…]]

Step one : hugely encouraging.

I’ve watched lots of videos recently featuring not only people that fix roofs and rock climbers but also arborists (Rivendell with chainsaws). And high-building window cleaners. And the Americans that hitch themselves up in trees on a weird ladder setup to shoot things, presumably at half-term when the schools are empty. A brilliant bit of kit common to all of them is a lanyard (longe, cordino). Shortish, extendable line; clip yourself in for backup safety/support.

The number of times I’ve been around climbers on crags in the Peak and had to look away. They’re hopping around unroped right on the edge of a potentially fatal drop, when it's so easy to be safe.

So I got a 3m/10′ bit of dynamic rope, a double-lock Via Ferrata-style carabiner and a Kong Slyde, a minimal little plate that allows the length to be adjusted easily (funnily enough Kong mention their use as energy dissipators, but I for one wouldn’t trust one for a Via Ferrata).

So versatile. Slapped on a pulley, hoisted the mattress into a roll, locked it with (120cm) slings held by quickdraws.

At this point, 90% of the hassle of shifting a mattress has gone.

Next, set up anchors for my speedline (hah, terminology).

I put a bolt into my bedroom window ledge and attached a knotted rope some years ago. I guess around the time there were the nasty earthquakes around L’Aquila, not all that far away, bit of (rational) paranoia. That end sorted. Sling around a sturdy bush at the other side of the yard. Rope between, this one a proper dynamic climbing rope. Friction knots and pulley to tension. Very ad hoc, but it worked.

BIG POINTS IF YOU CAN NAME THE SIX KNOTS (one used twice). I only knew one of these a few weeks ago. Thank you weird YouTube people.

I’ve already been surprised by how stretchy dynamic rope is, but that was with my weight on it. Mattress must weigh a lot less, but still, boing! This photo is staged – I pulled in more slack.
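Why the boing matters: with the load hanging in the middle of a line, the less it sags, the harder it pulls on the anchors. A rough static sketch, assuming a weightless rope, anchors at the same height and the load exactly at mid-span (none of which was quite true here); the 20 kg mattress and 15 m span are hypothetical, not measured.

```python
import math

G = 9.81  # m/s^2


def line_tension(load_kg: float, span_m: float, sag_m: float) -> float:
    """Tension (N) in each half of a taut line with a point load at mid-span.

    Idealised: weightless rope, level anchors, load exactly in the middle.
    Each leg drops sag_m over span_m / 2; vertical equilibrium gives
    2 * T * sin(theta) = m * g.
    """
    if sag_m <= 0:
        raise ValueError("a perfectly straight line would need infinite tension")
    theta = math.atan2(sag_m, span_m / 2)  # leg angle below horizontal
    return load_kg * G / (2 * math.sin(theta))


# Hypothetical: 20 kg rolled mattress, 15 m between window and bush.
for sag in (2.0, 1.0, 0.5, 0.25):
    t = line_tension(20, 15, sag)
    print(f"sag {sag:4.2f} m -> ~{t:5.0f} N per leg (~{t / G:4.0f} kg-force)")
```

Once the line is fairly flat, halving the sag roughly doubles the tension. That's one reason a stretchy dynamic rope sags so much under load, and why cranking a speedline bar-tight puts big forces on a window-ledge bolt and a bush.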

Note the blue lanyard used again, this time to stop it swooping down too far (it did anyway).

I took it down, put some crappy nylon rope around with bowlines to keep the roll and bunged it in the cantina (cellar). Either for the end of the month to put by bins when big things are picked up or to use as a bloody awful bouldering mat.

Next, new mattress. Same tying procedure. Even though it looked like heavier material, I guess without all the iron of archaic European mattresses it was much more manageable.

Here I made A STUPID BEGINNER’S MISTAKE. For an anchor I’d looped the rope around the bricks & mortar divider between the windows, I think with a hitch so it’d dangle a bit and balance. I used a locking carabiner. DIDN’T LOCK THE CARABINER.

Early on it crossed my mind a lot of this looked like mountain rescue stuff. Where I grew up in Derbyshire, that, and cave rescue were things. Similar terrain & issues here, but about 5km from here the Protezione Civile guys have a helicopter. Doubt they’d take a callout for a stuck mattress.

Right here you have a very bad situation. On loading, the rope etc. got messed up, jamming the anchor carabiner open and the rope stuck. Freeing the rope might have caused the casualty to fall to their doom. In this case, no human life involved, but it was lucky a lovely old planter at the top of the steps didn’t get knocked off.

I was able to hook the lanyard just below the bad carabiner, yank it from above and it clicked back properly (lanyards ftw!).

Taking it to its new home, hooked back onto the rope there.

Retensioned. Attached the other rope from bedroom, naively thinking haul it up.

Ok, not that naive. Did occur to me at this point that it really should be able to clear the window ledge. So stuck a couple of screw eyes into the beam in the bedroom, rigged anchor to haul it up higher.

Hmm. No clearance whatsoever.

I swore twice. Once with this realization, second time on catching myself on some dangling thorns. I tried putting another screw eye into a rafter just above, outside the window. But the wood started splitting, thought better.

Now properly stuck, in the abstract sense. Spent ages just trying to haul (using the lanyard & the remaining carabiners with friction knots on cords).

Last night I heard mention on the radio of whalers: apparently Arthur Conan Doyle worked on whaling ships to make a bob or two before doing Sherlock Holmes. With that on my mind, I got a steel fence stake and tried hauling him aboard. No joy.

Gave up. Let Moby Dick go free. Lowered down, unclipped.

I guess because I had been pulling a lot, it didn’t now feel too heavy. Pulled it over my back, took it upstairs without any trouble, Igor stance.

Job done.

This took hours. But apart from getting the job done, was really good for learning. I need to be totally familiar with all this stuff before taking on the tasks where there will be genuine risk (however small). I came close to damaging plant life this time, def don’t want to damage mine. Some lack of foresight – making it up as I went along didn’t entirely work (though I never went to a position I couldn’t back out of). Outrageously stupid mistake of not screwing up a key carabiner. Also, you can never have too many carabiners.

Hope this thing is comfortable.

No Such Thing as a Coincidence

I’m only now making a start at preparing the orto (veg plot) for planting. My neighbour Achille did his annual favour of digging it over with his little excavator a good few weeks ago. It would have been better if I could have started say 3 weeks sooner, but the weather has been almost continuously wet until yesterday. Suddenly, hot & sunny! So yesterday I made a start on clearing the brambles around the edges.

For reasons I won’t go into here, just now I wanted to measure out the main area. My workshop metal tape measure only goes to 5m but I remembered an antique surveying kind of measure my dad gave me years ago. Imperial units of course, but one side was mostly blank, so I marked metres on there.

I was pleasantly surprised that the main area was almost exactly 10 x 5m (2 x 1 poles).
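Checking the poles figure: a pole (also rod or perch) is 16.5 ft, which is exactly 5.0292 m, so 10 x 5 m comes within about 1% of 2 x 1 poles.

```python
POLE_M = 5.0292  # 1 pole = 16.5 ft = 5.5 yd = 5.0292 m exactly


def metres_to_poles(metres: float) -> float:
    """Convert metres to (imperial) poles."""
    return metres / POLE_M


length_poles = metres_to_poles(10)
width_poles = metres_to_poles(5)
print(f"10 m x 5 m = {length_poles:.3f} x {width_poles:.3f} poles")  # 1.988 x 0.994
```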

So I put in a couple of strings to mark it out, guide me for clearing, hoeing & raking.

As I was doing this, looking around, the tidiness of the figures impressed me more. Coming from the house there’s the human lawn (dog lawn is in the yard) which demarcates one side. Looking that way, the neighbour’s field is a terrace on the right, a wire fence at the bottom of the rise on my side. Another fixed side.

But looking along, beyond is the tiny field that’s also part of this property.

So the line there is totally arbitrary. But I wouldn’t have wanted to go much further: following the neighbour’s side there’s a big elder bush (well, the remains of one – Achille took his digger to it a bit while clearing) then a row of unmarked pet graves.

On the other side, there’s an area designated as being a chicken run, as and when I get around to populating the shed. Right now it’s overgrown with brambles and a cherry tree has sprung up, but essentially that side of the orto could have gone anywhere. Further along, plenty of space between the current orto and a little access track then a village lane.

So how come almost exactly 10 x 5m? Finally it clicked. Because I’d decided it should be 10 x 5 metres. I believe I initially put the fences up long before moving in here, it wasn’t an orto before. Perhaps 5 x 8 metres. I extended the length 3 or 4 years ago, obviously choosing a round number. D’oh!

Convenient though.

Line of Doody

Short post to mention the ‘bad apple’ narrative around the police.

Setting the scene : two very significant precursors of modern police were the cops employed by West Indies merchants on the Thames and slave patrols in Carolina.

There’s a dichotomy within the police between their job as crime-preventers, protectors of the public, and their role as the domestic strong arm of the state and associated (many of them financial) institutions.

Thing is, the typical media narrative is that of the bad apple. Even if the corruption in a drama like Line of Duty goes right to the top, it’s placed as something exceptional.

The well-documented ‘Firm within a Firm’ of the Met. CID in the 1970s, criminals effectively in the employment of coppers of all ranks, is a classic case of widespread corruption. Win-win for those involved, so such things are presumably fairly common. But I’d suggest this narrative fails to mention the more sinister purpose of the police, as the government’s militia. A delightful phrase I found on Wikipedia is ‘the monopoly on violence’. Think about it.

I personally experienced bad apples in 1980s Sheffield (luckily, when it came time for the cop I was alleged to have assaulted to give his evidence, he was suspended from duty for an unrelated assault incident, so I wasn’t locked up for trying to defend a victim of police assault…).

Same period, Thatcher & co. mobilised the cops as their private army to harry the North and shut down the miners’ strikes (and by extension the power of their unions).

I get on well with the (armed!) cops that live near me now. Who knows what it would be like without them? People are generally honest and non-violent, but I’m prepared to accept life is better because they are here. It's rural, which makes a difference. But police proper is about the city (or City): check the etymology. Historically, their main job has been to maintain the status quo for the aristocracy and the merchants.

What I’m suggesting is that the things of concern aren’t only bad individuals, bad culture (like the institutional racism in the UK police). Looking at those issues, you’re distracted from the more fundamental fact that they are a quasi-militia that serves the state, wherever it prefers to go.

Incidentally –

‘chauvinism’ : named for Nicolas Chauvin, a legendary and excessively patriotic soldier.

Not Climbing

For a few years now I’ve had a leaky roof. It has to rain a lot to be an immediate issue (puddle on bathroom floor) but this last winter there were a couple of days with torrential rain, the ceiling is starting to look dodgy. I’ve put it off and put it off, because the only options seemed to have been to pay a company to erect scaffolding, at a cost of €1000s, or to ask a local odd job man to kill himself for about €8 an hour.

Thing is, it’s not, on the face of it, a very big deal at all. The roof here is done in the traditional local style, terracotta tiles held on by gravity alone (aided by a few rocks around the perimeter). The problem area is only a few metres away from easy ladder access.

But…on one side it’s a 5m drop onto a yard paved with flagstones, the other 6m onto a concreted track.

Hmm…

When we got this place there was a lot of work needed doing (it was basically a shell), but we didn’t need to move in immediately. So despite having only minimal skill at woodwork I decided that I’d tackle the bits that seemed manageable myself (stairs, windows…). Spend the money that would have gone to proper craftsmen on equipping a little workshop.

That worked out remarkably well. The results would not win prizes for aesthetics, but I got (mostly) functional bits in place. (Mostly: the window frames are far from perfect, bit gappy, but still adequate.) This is an old house in a rural hamlet, rustic is acceptable.

So there’s my Plan C for fixing the roof. Get the necessary gear, do it myself.

I believe I have zero experience of roofing. But years ago I did wind up spending a lot of time in the company of expert climbers in a shared house in Sheffield (entertainingly documented in a short British Mountaineering Council documentary video, Statement of Youth: Hunter House Road and the Dole). I did have a go at climbing myself a few times around that period, only very easy stuff, but enough to get an idea of how it was done.

I am scared of heights, but only much bigger ones than this (that weird compulsion to jump off terrifies me). In this case, I’m scared of hurting myself.

Gear then. Wait, first, the problem…

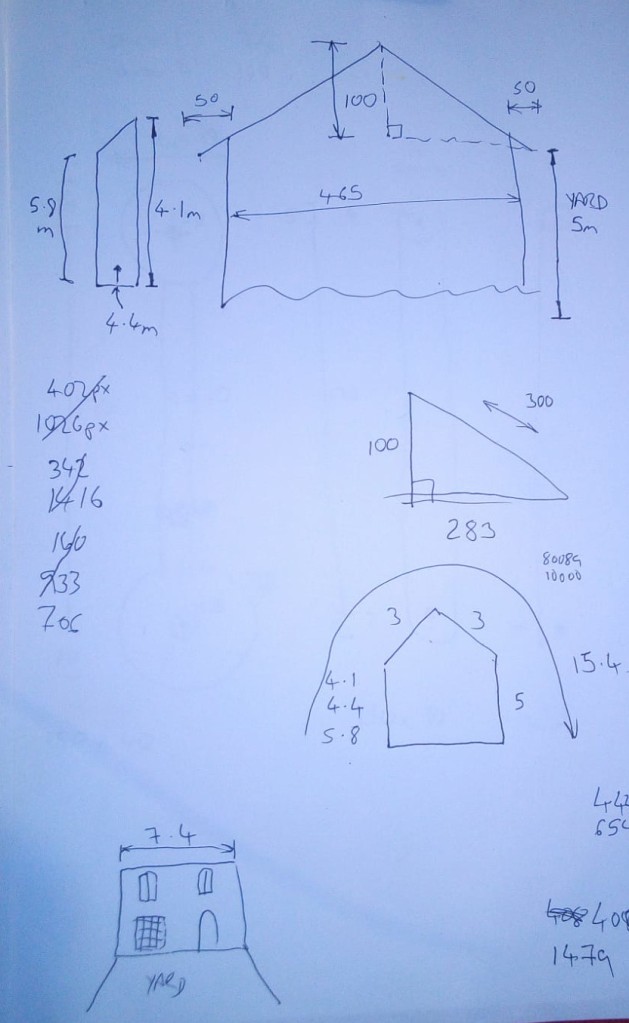

Biggest problem is, as you can see in the photos above, virtually nothing on top that could serve as anchor points. A very old, crumbling chimney stack, that I doubt a gecko would trust.

Measuring up (taking photos including a tape measure, then using the GIMP image editor to measure other bits) –
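The photo-measuring trick, sketched in Python: get the pixel coordinates of two marks on the tape whose real separation you know (e.g. with GIMP's Measure tool or by reading the pointer position), work out metres per pixel, then scale other distances. The function name and the pixel readings are made up for illustration, and it assumes the thing being measured is roughly in the same plane and at the same distance from the camera as the tape; anything else needs perspective correction.

```python
import math

Point = tuple[float, float]


def measure_from_photo(ref_a: Point, ref_b: Point, ref_length_m: float,
                       p: Point, q: Point) -> float:
    """Estimate the real length between pixel points p and q.

    ref_a, ref_b: pixel positions of two tape-measure marks whose true
    separation is ref_length_m. Assumes no lens distortion and that the
    feature lies in roughly the same plane as the tape.
    """
    ref_px = math.dist(ref_a, ref_b)
    if ref_px == 0:
        raise ValueError("reference points must differ")
    metres_per_px = ref_length_m / ref_px
    return math.dist(p, q) * metres_per_px


# Hypothetical pixel readings: 1 m of tape spans 400 px horizontally,
# and a roof feature spans 600 px vertically in the same shot.
print(f"{measure_from_photo((100, 500), (500, 500), 1.0, (120, 300), (120, 900)):.2f} m")  # 1.50 m
```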

I reckon if I put a line over the middle of the section, bolts in the wall lane side, to be decided yard side, that’ll give me an anchor point at the apex with a fair bit of clearance if I was to take a tumble.

Thing is though, this is Not Climbing. There, the typical task for a rope would be to catch you if you fell. Here, main thing is not to fall.

Now gear…

Climbing gear is so sexy. I’m tempted to get loads just to hang on the walls as ornaments, sit on my arse.

Budget is limited, so I want the minimum that will make me feel safe. Proper helmet, natch – that’s a multi-justifiable buy, I’ll be getting the bicycle out of the cantina very soon, seems daft not to wear one.

On the right, a dirt-cheap rope advertised, very irresponsibly, as being for climbing. The aforementioned gecko wouldn’t go fishing with that. The carabiners are truly awful – might be handy to use with dog leads, maybe, not even sure about that. But the rope itself is plenty strong enough to take my weight without a jerk. Will do for the High Line (not that kind). This is Not Climbing.

As a realistic concession to safety, the rope on the left is a proper UIAA-certified dynamic rope. As long as I have a reasonable amount of redundancy in the anchors, their individual strength doesn’t matter too much. Like, I will put a loop on the dodgy chimney, and bolts at the start. Effectively walk-abseil across the roof, Figure-of-8 belay device backed up by at least one Prusik.

I opted for one of the Petzl Figure-of-8s. The ‘rescue’ type, with the horns, I would have preferred, but I want that to be solid beyond question and the reputable brand ones are pricey. Nor do I want to wind up locked on a trad figure-of-8, needing to be rescued. The square-looped Petzl seemed a good compromise. (I will carry an extra loop or two for Prusiks, just in case).

There’s no rush to do this; any time before the autumn rain will be fine. There are some little crags over the back where I can practise abseiling, maybe find an easy route or two. Try and build a bit of confidence. (Also hopefully strength – I’m ultra-weedy at the mo.)

It is a recurring problem, so if I have to I’ll get more gear. Not sure I’ll be thinking about this other tile slippage this year though.

PS. Oh yeah, harness. My darling Marinella has her old hang gliding harness on offer. I’ll have to have a look, attaching from the back seems a little kinky. Can get good quality climbing ones <€50, which is still within my woolly budget. Tempting, but for something I may only use 2 or 3 times (hmm, maybe annually thereafter…), hard to justify. This afternoon I had a play (following YouTube instructions) on doing a ‘Swiss Seat’ harness. The video seems to suggest serious genital disfigurement, but it did actually feel quite comfortable, definitely secure. It’s also very easy to make a chest harness with a sling (YouTube again), combined with that Seat I reckon that’ll be fine for occasional use. So have ordered a couple of decent slings, couple more crabs.

PPS. My old mucker Stephen (who tends to know about things like roofs) commented asking whether I could check the roof wood (timber, er…he uses other words I don’t know) from inside. The main beam is open, poked with screwdriver, rock hard. Same beam continues above my bed. I slept there for 5? years before noticing it has a date carved in it, I think says “A 1798 D”.

Demonic Electronic Firefly (3v Flashing Joule Thief)

A few weeks ago I gave my girlfriend a Kinder Egg. She gave me back the (turtle!) toy container, suggesting I might have a use for it. Of course I had: lucciola elettronica (electronic firefly)!

Aside from having the Best Name Ever, the Joule Thief is a remarkable little circuit:

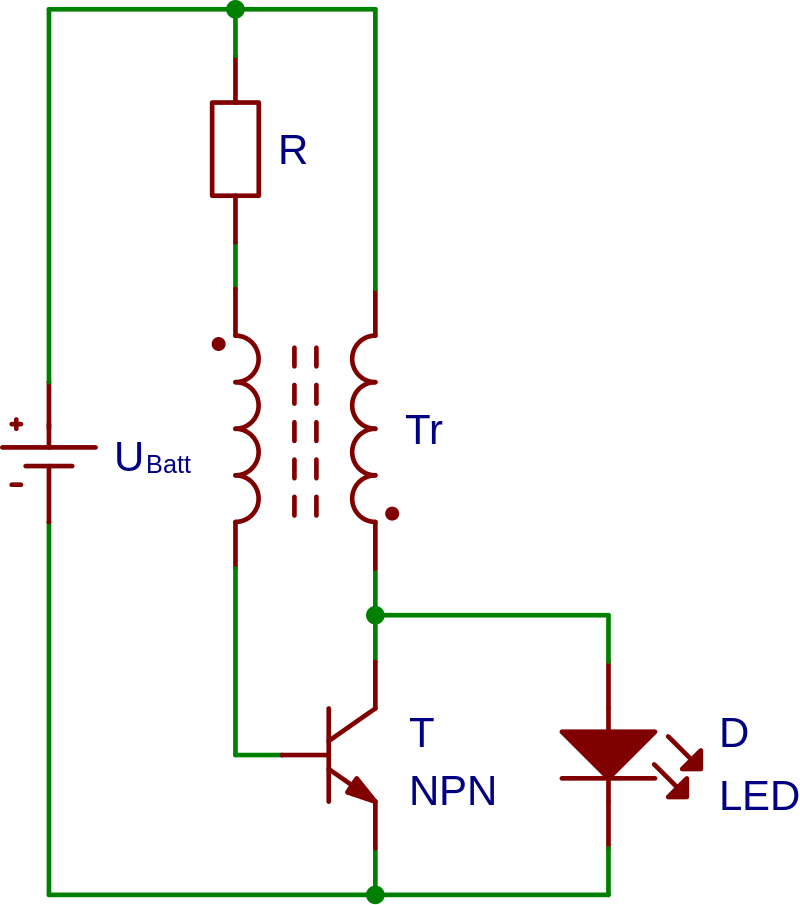

[Pic from Wikipedia by Rowland, CC BY-SA 3.0]

It’s a switching boost converter that can run from a very flat 1.5v battery and ‘constantly’ light an LED at a very low average current by delivering a chain of very brief pulses. Demonstrates how close to magic even the apparently simplest electronics can be.

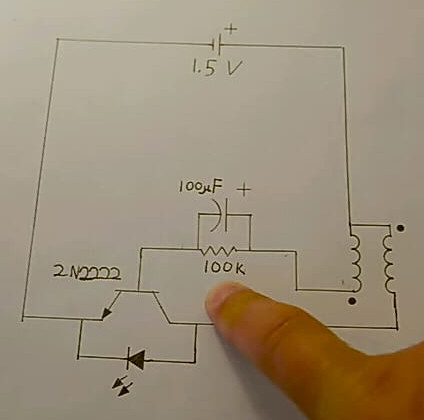

A video by Eman2000 had been recommended to me for a Blinking Joule Thief. Essentially the same circuit as above, but with a biggish capacitor added to stretch the timing. Yeah, that’s what should go in the pod.

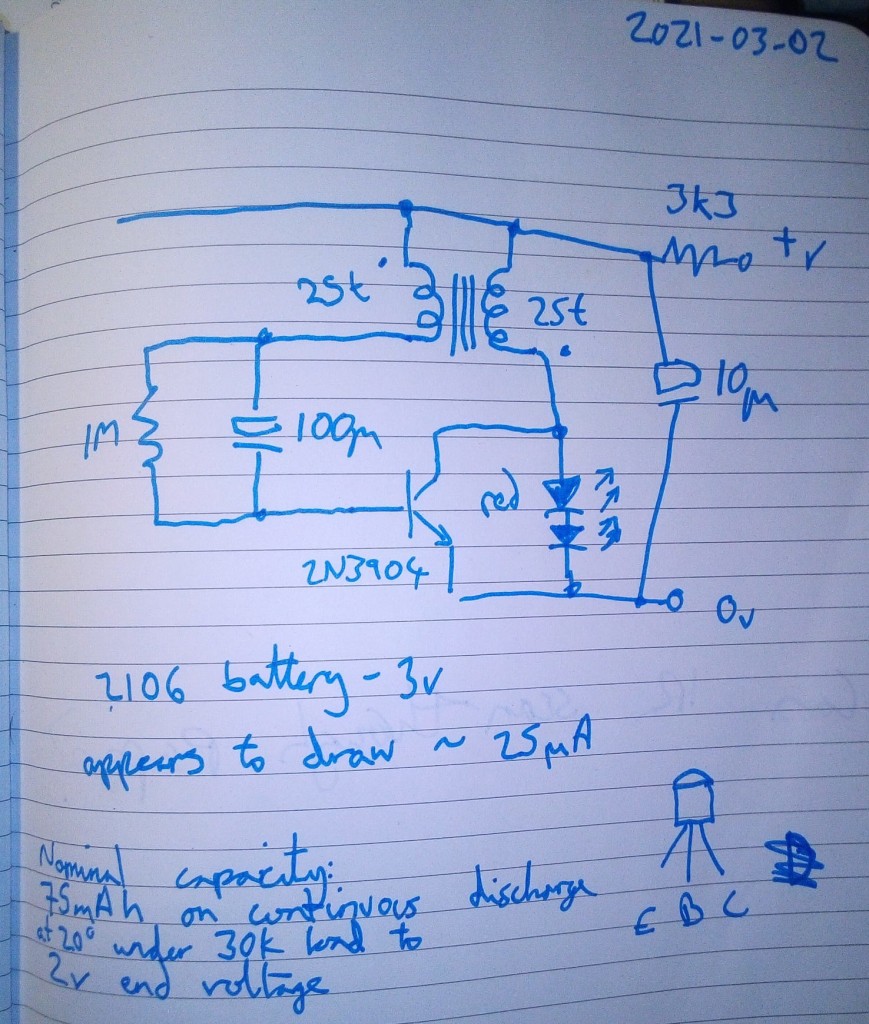

But part of the alchemy depends on the relative voltages around the transistor & LED. The original circuits work for a <=1.5v supply. I only had a 3v (CR2016) battery that would fit in the Kinder pod.

Because it’s Dark Art material, I didn’t bother with analysis or trying a sim in SPICE (which almost certainly wouldn’t have been remotely accurate), went straight to breadboard.

Snipped a little toroid off an old burnt-out PSU, wound on 2×25 turns of thin wire (Wikipedia suggests 20t, but because I was a bit sloppy I threw in an extra 5 for good measure). Went for the trial & error school of design. Starting from Eman2000’s 1.5v blinker I had a good play.
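On the "extra 5 for good measure": on a given core, inductance goes roughly with the square of the number of turns, so 25 turns instead of 20 is about 56% more inductance per winding (the 1:1 ratio between the two windings is unchanged). A one-liner, assuming the same toroid and ignoring saturation; what that did to the blink timing wasn't measured.

```python
def inductance_ratio(turns: int, reference_turns: int) -> float:
    """Relative inductance of a winding on the same core (L grows as N**2)."""
    return (turns / reference_turns) ** 2


print(f"{inductance_ratio(25, 20):.4f}")  # 1.5625
```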

So…first observations: the capacitor/resistor sizes bear little relation to the timing you might expect; it's seriously non-linear. At 3v the LED appears permanently lit (I think most likely blinking at a high frequency, but I didn’t bother to check).

Semi-logically, I started by tweaking the LED (the loading is significant) – variations on colour, series resistance, series diodes for voltage drop etc. Two series red LEDs seemed most promising. Scientifically changing several variables at once, I thought maybe reducing the current from the battery might help. Yes & no. It made quite a difference but only approached what I was after when backed up by a capacitor. I tried a couple of other transistors, it didn’t seem at all sensitive, probably any small-signal bipolar would work. I didn’t bother playing with the transformer, too fiddly.

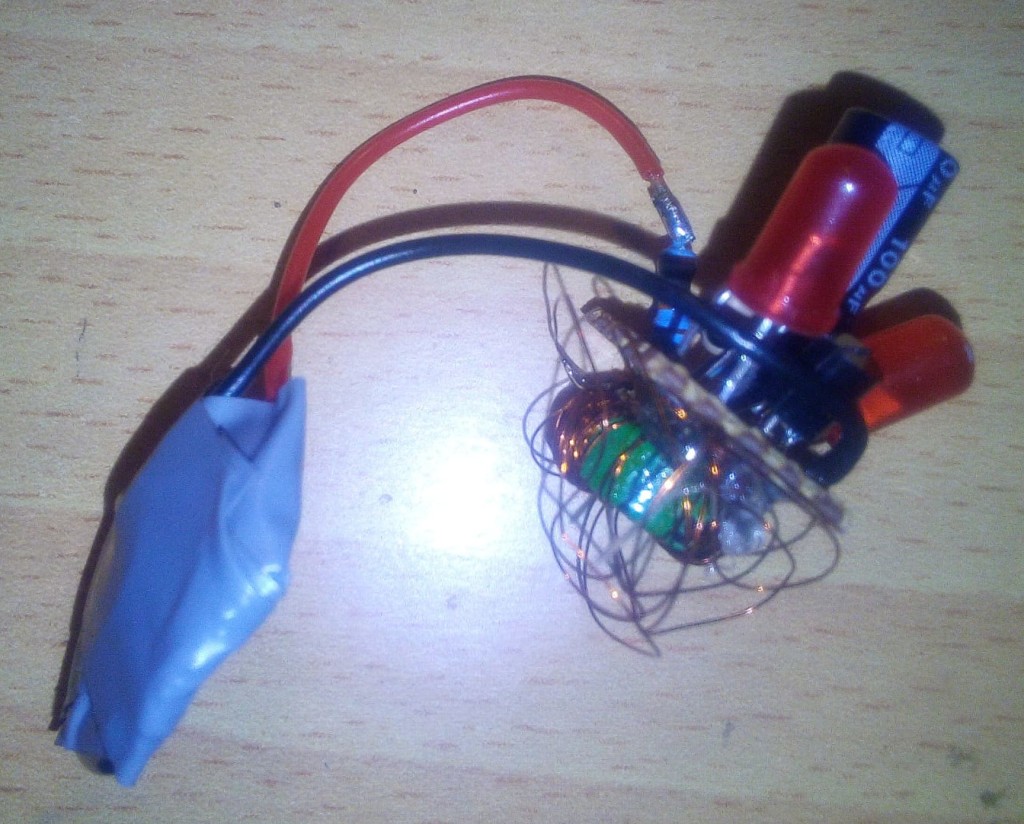

This is the circuit I settled on:

It flashes a couple of times a second with either 3v or 1.5v. I don’t believe the measured current because it was a very intermittent draw (I know, should’ve got it on the scope, but I just wanted to get it done). Probably more like 3 x 25uA, which would suggest 1000 hours before the battery reaches 2v. That’s a guess. But given that it can work below 1.5v, it should last a month or two, if not a year or two.
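The battery-life guess as arithmetic. This assumes a CR2016-type coin cell of around 90 mAh (a typical nominal figure; check the actual cell's datasheet) and the guessed 3 x 25uA average, and it ignores both the 2v cut-off and the fact that coin cells tend to deliver less than nominal under pulsed loads.

```python
def runtime_hours(capacity_mah: float, avg_current_ua: float) -> float:
    """Ideal runtime = capacity / average current (uA converted to mA)."""
    return capacity_mah / (avg_current_ua / 1000.0)


# Assumptions: ~90 mAh nominal cell, 3 x 25 uA average draw (a guess).
hours = runtime_hours(90, 3 * 25)
print(f"~{hours:.0f} h, ~{hours / 24:.0f} days")  # ~1200 h, ~50 days
```

That lands in the same ballpark as the 1000-hour guess, i.e. a month or two of continuous flashing.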

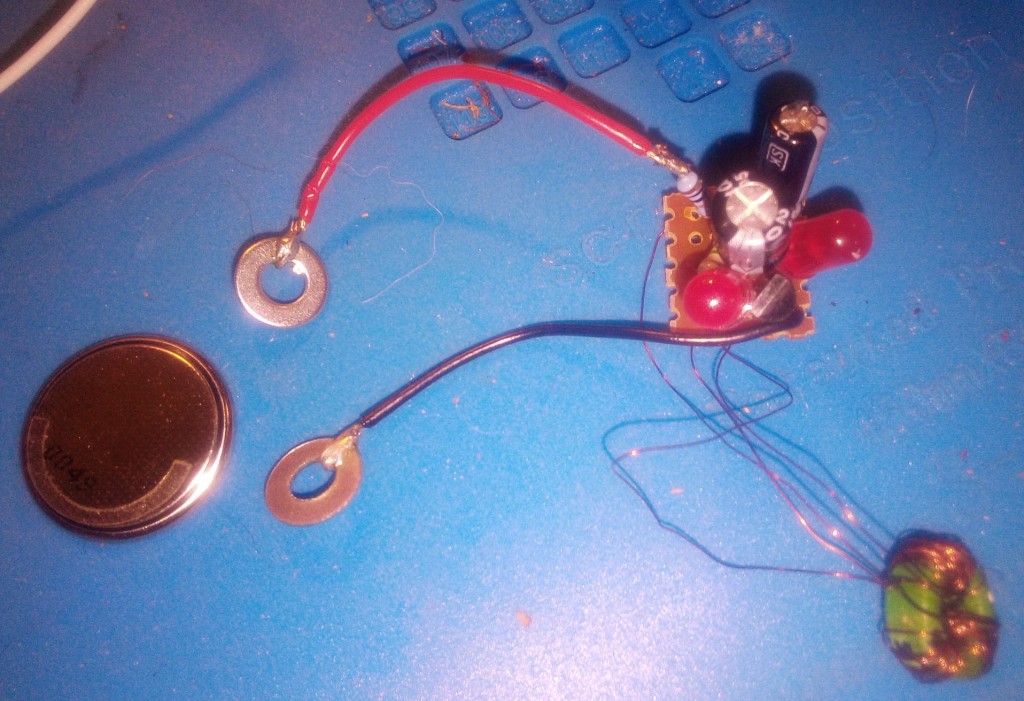

Soldered up on a tiny scrap of stripboard:

Bit of hot glue & tape:

Then it crossed my mind, where’s she going to put this? Fridge magnet! So I hot-glued 3 tiny magnets into the Kinder pod.

Turns out there are much more hi tech approaches to similar problems, ATtiny45 EverLED and TritiLED both use microcontrollers for very long life little lights.

It is a little creepy in the dark. Leave the radio on.

Boost/Buck Converters for a Split Rail Supply

tl;dr No.

I like op amps and they are best served with a 0v-referenced split rail supply, say +/-15v.

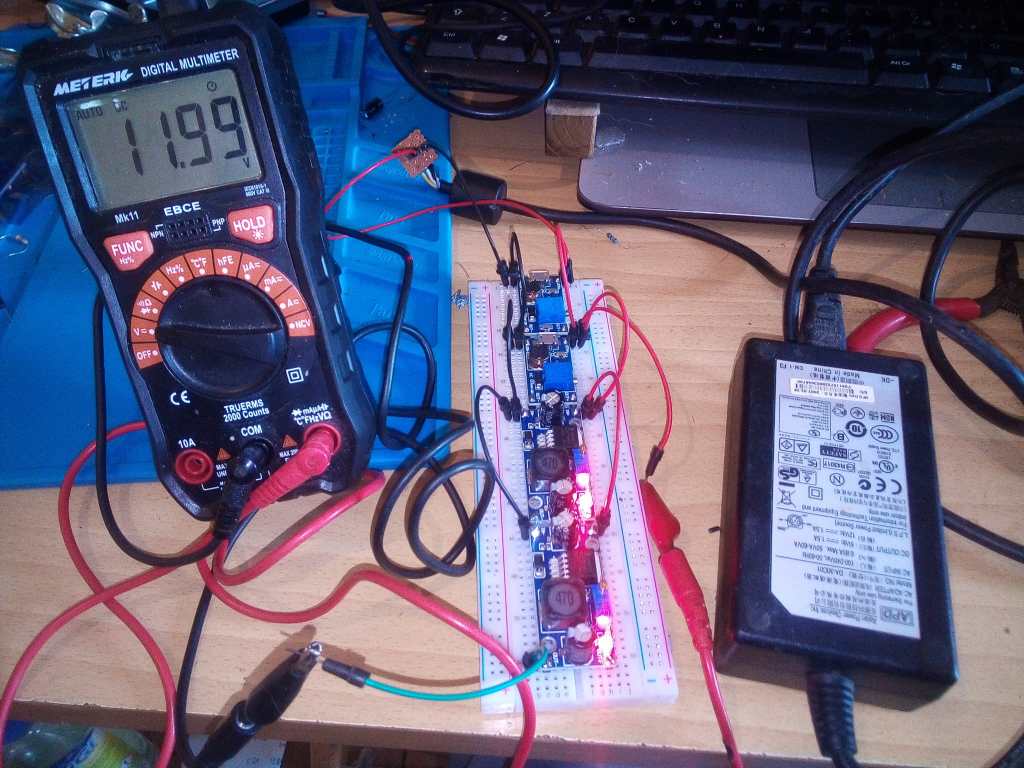

I’ve got a bag of cheap, adjustable, switching boost converter modules [1] and a bag of cheap, adjustable, switching buck converter modules [2]. I have an old laptop power supply with 12v out. Can I get +/-15v?

First (mildly irritating) snag, the boost converter will only pump the 12v to 28v.

Second (killer) snag, they aren’t isolating. They have a common negative line.

Doing 12 -> 28 -> 14, then using the 14 as 0 ref nearly works. With balanced 680R resistors (ie. 20mA load on each rail), it stays very close to +/- 14. But loading just the upper segment does knock it out of balance (unsurprising, I guess). With 340R (40mA) it swung it to -20/+8. So it’s asymmetrically sensitive.
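A possible explanation for the asymmetry, offered as an assumption rather than something measured: the LM2596 is a non-synchronous buck (it relies on a catch diode), so it can push current into its output but can't pull it back out. Loading the lower segment just makes the buck source more; loading the upper segment pushes current into the midpoint that nothing can absorb, so the midpoint floats up until the two loads carry equal current. An idealised model, assuming the lower load stayed at 680R:

```python
def midpoint_voltage(v_total: float, r_upper: float, r_lower: float,
                     v_set: float) -> float:
    """Idealised midpoint of a 'pseudo split rail' made with a buck converter.

    v_total: boost output (top rail), measured from the common negative.
    r_upper: load between the top rail and the midpoint.
    r_lower: load between the midpoint and the common negative.
    v_set:   voltage the buck is adjusted to (the intended 0v reference).

    Assumes the buck can source current into the midpoint but not sink it.
    """
    i_upper = (v_total - v_set) / r_upper  # flows INTO the midpoint from above
    i_lower = v_set / r_lower              # flows OUT of the midpoint below
    if i_lower >= i_upper:
        # The buck makes up the shortfall and holds its set point.
        return v_set
    # The buck can't soak up the excess, so the loads act as a plain divider.
    return v_total * r_lower / (r_upper + r_lower)


for r_up in (680, 340):
    vm = midpoint_voltage(28, r_up, 680, 14)
    print(f"upper load {r_up}R: rails ~ +{28 - vm:.1f} / -{vm:.1f} v")
```

With 340R on top this idealised model gives roughly +9.3/-18.7, the same direction as the measured -20/+8 but not the same numbers; the real modules have their own quirks. It's also the reason an op-amp buffer, which can both sink and source, is the more robust way to make the midpoint.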

That suggests that in practice it would probably be better to split the 28v with a resistive divider buffered by an op amp. (I have occasionally experienced instability doing this in the past, a little care and/or trial & error is needed with decoupling capacitors).

Incidentally, most of the electronics I do is audio/music-related. When I first encountered switch mode PSUs many years ago I avoided them like the plague; the switching frequency would often interfere with the signal. Until very recently I’d opt for a trad transformer with a linear regulator (78xx/79xx or the slightly noisier LM317/LM337) or a similar discrete circuit. But, tempted by the low prices and high efficiency of the modern devices, I discovered they’re fine. At least those for low-voltage conversion use relatively high switching frequencies and so are entirely reasonable for audio applications (though shielding might still be desirable).

The modules I have are generic Chinese-manufactured things; even ordered from a European supplier via Amazon they come in at less than €2 apiece, probably cents from a Chinese supplier. Specifically:

[1] Boost converters, based on B628/MT3608, module labelled HW-183.

2-24v in, ?-28v out, 2A. Switching freq : 1.2MHz.

[2] Buck converters, based on LM2596, module labelled HW-411.

3-30v in, 1.5-35v out, max out 3A. Switching freq : 150kHz.

The current ratings for these should be considered as absolute max. Personally I’d not even bother trying them if >1A was needed.
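One reason to be wary with the boost module in particular: by conservation of power, the input side carries more current than the output, roughly Vout/Vin divided by the efficiency. A sketch, assuming a round 85% efficiency (not a datasheet figure) and that the "2A" on the listing refers to the switch/input side, which the listings don't make clear:

```python
def boost_input_current(v_in: float, v_out: float, i_out: float,
                        efficiency: float = 0.85) -> float:
    """Average input current of a boost converter from power balance.

    P_in = P_out / efficiency and I_in = P_in / V_in. The default efficiency
    is an assumed round figure, not taken from the MT3608 datasheet.
    """
    return (v_out * i_out) / (efficiency * v_in)


for i_out in (0.25, 0.5, 1.0):
    i_in = boost_input_current(12, 28, i_out)
    print(f"{i_out:.2f} A out at 28v from 12v -> ~{i_in:.2f} A average in")
```

So at 1A out, 12v to 28v, the input is already well past 2A on average, before counting the ripple peaks the switch also has to handle.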

Simple Drawing Robot, Python Sums Weirdness

The WHO have an Adult ADHD Self-Test. It’s very short, 18 questions, and “Six of the eighteen questions were found to be the most predictive of symptoms consistent with ADHD.” Number 6 is:

How often do you feel overly active and compelled to do things, like you were driven by a motor?

I’ve used Arduinos & similar microcontrollers before, though until now always for a specific purpose. But a few weeks ago I impulsively bought an Arduino Starter Kit and a bag of 37 compatible sensors. Oops.

There are easily 10 higher priority things I should be doing, but I got sucked into this.

I’m new to servos, but after finding it very easy to make a Cat Annoyer using one (and an IR proximity switch) I wanted to try something a little more ambitious, a plotter driven by 3 servos via an interesting mechanism:

I made a very rough prototype and it became clear (amongst other things) that I needed to know what would be good dimensions for the arms.

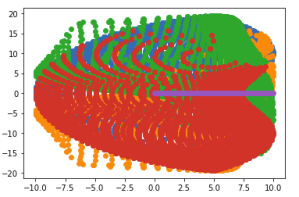

So I coded up the formula from Study and Development of Parallel Robots based on 5-Bar Linkage in Python, and got a weird result.
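For anyone wanting to play along, here's a generic inverse-kinematics sketch for a symmetric 5-bar linkage. It is not the formula from the paper and not the code in the mini-draw repo; the function names, base width and arm lengths are hypothetical. Each side is just a circle-circle intersection: the elbow has to be l1 from its servo and l2 from the pen, and the law of cosines gives the angle.

```python
import math

Point = tuple[float, float]


def arm_angle(motor: Point, pen: Point, l1: float, l2: float,
              side: int) -> float:
    """Angle (radians, from the +x axis) of one servo arm of a 5-bar linkage.

    The elbow must be l1 from the servo shaft and l2 from the pen, so it sits
    on the intersection of two circles; the law of cosines gives the offset
    alpha from the servo->pen direction. side = +1 or -1 picks which of the
    two intersections (elbow bent one way or the other) to use.
    """
    dx, dy = pen[0] - motor[0], pen[1] - motor[1]
    d = math.hypot(dx, dy)
    if d == 0 or not abs(l1 - l2) <= d <= l1 + l2:
        raise ValueError(f"pen position {pen} is unreachable from {motor}")
    phi = math.atan2(dy, dx)
    cos_alpha = (l1 * l1 + d * d - l2 * l2) / (2 * l1 * d)
    alpha = math.acos(max(-1.0, min(1.0, cos_alpha)))  # clamp float noise
    return phi + side * alpha


def five_bar_ik(x: float, y: float, base: float = 60.0,
                l1: float = 50.0, l2: float = 80.0) -> tuple[float, float]:
    """Servo angles in degrees for the pen at (x, y), servos at (-base/2, 0)
    and (base/2, 0), both elbows bent outwards. Dimensions are hypothetical."""
    left = arm_angle((-base / 2, 0.0), (x, y), l1, l2, side=+1)
    right = arm_angle((base / 2, 0.0), (x, y), l1, l2, side=-1)
    return math.degrees(left), math.degrees(right)


print(five_bar_ik(0.0, 80.0))
print(five_bar_ik(20.0, 90.0))
```

A couple of quick sanity checks catch a lot of sign and units slips: for a pen point on the centre line the two servo angles should add up to 180 degrees, and each computed elbow should sit exactly l2 from the pen. Python's trig functions work in radians, hence the final conversion.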

I’m going to pause this post here; more details are on GitHub at https://github.com/danja/mini-draw. (I want my rambling informal background here and concise instructions over there, but there's still a way to go before I have the thing working.) Let’s just say I didn’t get the simulation I expected.

Bitcoin, Ponzi & Capitalism

Just some passing thoughts I felt like spitting out. Coincidentally I watched another episode of Adam Curtis’ excellent Can’t Get You Out of My Head documentary series last night.

I’ve seen talk of Bitcoin as being a kind of con, because it’s all virtual. But virtually all money has been virtual since Nixon dropped the tie to the gold reserve.

A situation that Curtis brought up was that of the Appalachian coal miners in days of yore. They worked for the coal company, got paid in the coal company’s own currency which they had to spend at the company store.

Nowadays, the company isn’t so easy to demarcate. But a minuscule percentage of people control the vast majority of wealth. It may be someone middle-class that owns the store, but they are still company employees. It’s the company’s definition of money everyone has to use. Hard to point the finger very precisely, but a vague direction might be bankers, the uber-rich and most politicians. Oh yeah, and the management of Google, Facebook, Amazon & co. It’s popular to blame the mass media for a lot of things – but I reckon they’re more like symbionts, creatures down the scale that live on the pickings of the bigger fish.

That may sound verging on conspiracy theory, deep state etc. But it seems to run deeper than that, and isn’t even hidden, not even in plain sight, it’s apparent all around.

Such a system might be considered just the way things are, the lesser of various evils (fascism, USSR-style communism), at least for folks in wealthy Western nations.

But a fundamental flaw in this setup is that it’s inherently unsustainable. The generation of wealth currently comes from the exploitation of natural resources, and the way that’s currently done, they are finite. A side effect, of greater concern, is that the climate is getting messed up.

A crude umbrella term for this system is Capitalism.

I’m no political theorist, not sure I’ve read more than a page of Marx or a paragraph of Adam Smith. But as a student I was briefly drawn into a pyramid scheme (not long enough to lose more than a tenner, but still). It's a well-known company, I forget its name. Cleaning products mostly; you make your money by getting other people to sell the product for you. But these systems collapse because there isn’t an infinite tree of sellers/customers. Well, not collapse exactly: the money gets funneled uphill.
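The "no infinite tree" point as back-of-envelope arithmetic. Assuming, purely hypothetically, that every member signs up six more, a single level of the pyramid outnumbers the whole planet (taken as roughly 8 billion people) by the thirteenth level down. Real schemes stall long before that, because recruitment saturates.

```python
def levels_to_exhaust(branching: int, population: int) -> int:
    """Smallest level n whose size alone (branching**n, founder at level 0)
    exceeds the given population."""
    n, level_size = 0, 1
    while level_size <= population:
        n += 1
        level_size *= branching
    return n


# Hypothetical: each member recruits 6 others; ~8 billion people on Earth.
print(levels_to_exhaust(6, 8_000_000_000))  # 13
```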

Seems to me the current use of the planet has a lot in common with that. The resources of people further down the tree, in time, are being funneled into profit now. People in the past were sold a future based on the wealth of people now, and in turn we’re being sold that of those not even born yet. In this context wealth equates to livable conditions and breathable air.

Count as a rant? Whatever. Penned it, can get back to consuming.

PS. Bum. I used Bitcoin as clickbait, didn’t really follow through. It’s just an extreme form of same-as. Extreme in that it consciously seeks to waste natural resources to make (mine) money. If an alien wanted to turn this planet into a place more hospitable to sulphur-breathing hell beasts, perfect invention.

Incidentally, long before I knew anything about Bitcoin, my old mate Reto came up with an eco-alternative : Greuro